The first wave of healthcare AI was built to answer questions. You ask, it responds, and the interaction ends there. That model works fine for isolated tasks, but healthcare isn’t isolated. It’s continuous, complex, and deeply interconnected.

What healthcare actually needs is something different: an intelligence layer that fits how care is delivered in the real world. Not just systems that process data, but systems that remember what’s happened, explain their decisions, adjust to context, and act before problems escalate.

That shift, from automation to autonomy, comes through something you might call “living intelligence”: an intelligence layer designed to mirror the continuous, adaptive nature of human-centered work. Unlike static AI that responds and resets, living intelligence persists. It retains context across time, reasons transparently about its decisions, adapts to the specific conditions of each person, process, and regulatory environment, and acts proactively rather than waiting to be asked.

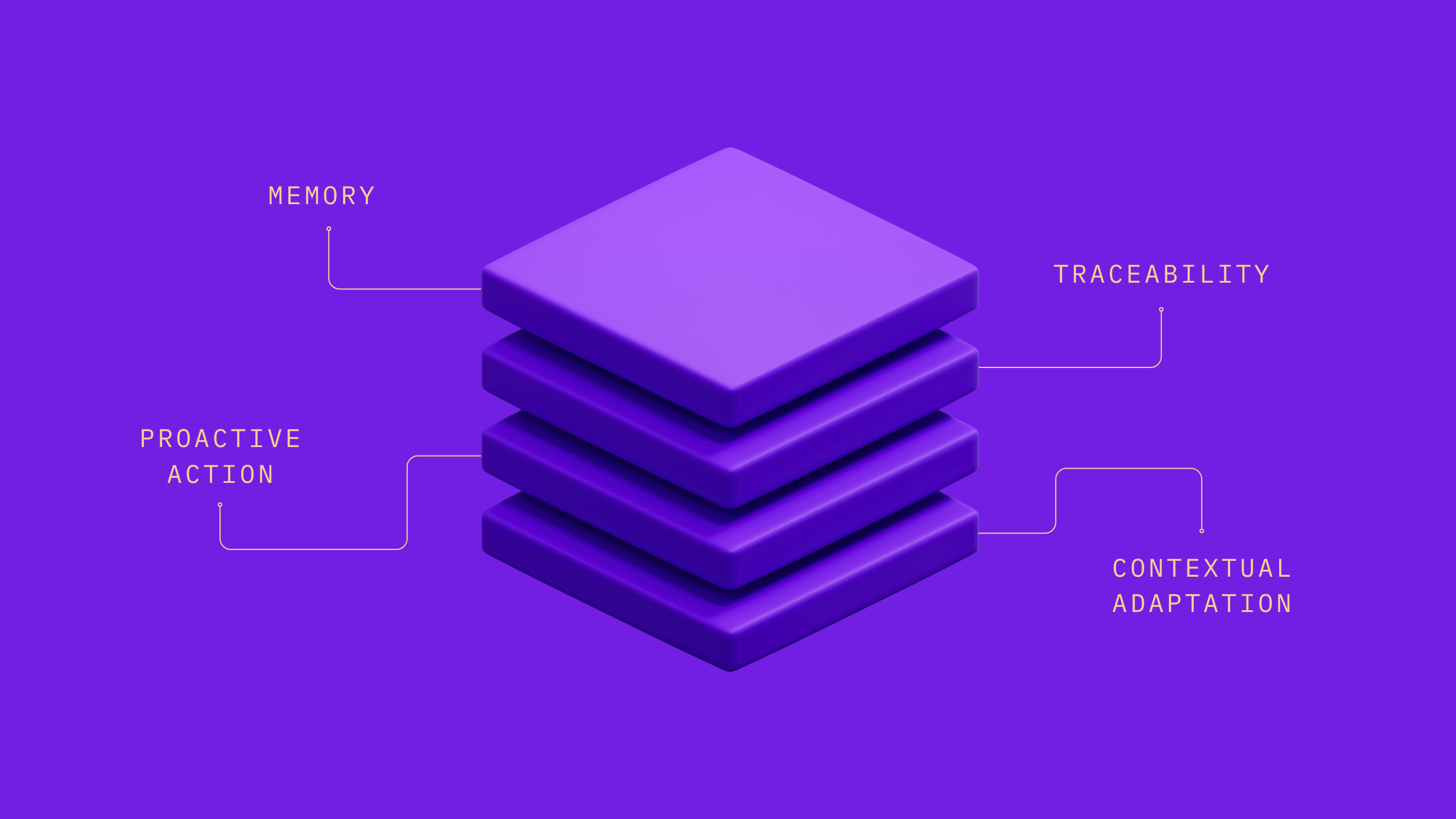

The shift to living intelligence requires organizations to move beyond simply implementing automation and adopt four primary principles: Memory, Explainability, Contextual Adaptation, and Anticipation.

The Four Principles

Memory: context that persists over time

Every interaction in a static AI system starts from zero. There is no thread connecting last week’s assessment to today’s decision, no shared understanding of what was tried, what changed, or what was explicitly ruled out. Each encounter carries its own blank slate, which means every team, every clinician, and every touchpoint is constantly rebuilding context from scratch.

Memory changes that. A system built on this principle retains what has happened, what was decided, and what has changed across people, processes, and time. It knows which interventions have already been attempted. It notices when a patient’s situation has drifted from the last documented status. It carries forward the reasoning that shaped a prior decision so that future decisions can build on it, rather than ignore it.

The practical result: organizations stop losing the thread every time a task closes. The people they serve stop feeling like strangers at every new encounter. Work becomes cumulative rather than repetitive, and the system’s value compounds with every interaction, rather than resetting with every session.
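One way to picture persistent memory is an append-only case history that later decisions can query. The sketch below is illustrative only: `CaseMemory`, its fields, and the event kinds are hypothetical names chosen for this example, not part of any specific product.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class CaseMemory:
    """Hypothetical per-case history that persists across encounters."""
    case_id: str
    events: list = field(default_factory=list)

    def record(self, day: date, kind: str, detail: str, rationale: str = "") -> None:
        # Append-only: earlier entries are never overwritten,
        # so the reasoning behind prior decisions stays available.
        self.events.append(
            {"day": day, "kind": kind, "detail": detail, "rationale": rationale}
        )

    def already_attempted(self, intervention: str) -> bool:
        # True if this intervention was recorded at any earlier point.
        return any(
            e["kind"] == "intervention" and e["detail"] == intervention
            for e in self.events
        )

    def latest_status(self) -> Optional[str]:
        # Most recent documented status, or None if none was recorded.
        statuses = [e for e in self.events if e["kind"] == "status"]
        if not statuses:
            return None
        return max(statuses, key=lambda e: e["day"])["detail"]
```

Because nothing is overwritten, a later encounter can check `already_attempted(...)` or compare a new observation against `latest_status()` instead of rebuilding context from scratch.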

Explainability: decisions that can be audited and trusted

Trust in healthcare AI doesn’t come from accuracy statistics. Clinicians, compliance officers, and care teams need to understand not just what a system recommended, but why: what information was considered, what logic was applied, and where that logic lives so that it can be reviewed and audited.

Explainability means every recommendation or action comes with traceable reasoning. The system doesn’t ask teams to trust a black box. Instead, it opens the box, surfacing the specific inputs used, the criteria weighed, and the rationale behind a given output. That reasoning is auditable, reviewable, and legible to the humans who must ultimately stand behind the decisions.

This matters especially in high-stakes or regulated workflows. When a prior authorization is flagged, a care gap is identified, or a clinical alert is triggered, the people who receive that output need to be able to evaluate it, not just accept it. Explainability makes that possible. It transforms AI from a system that produces outputs into one that produces accountable outputs. And that distinction is the difference between a tool teams tolerate and one they actually rely on.
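As a rough illustration of what “traceable reasoning” could look like in data terms, the sketch below attaches the inputs, criteria, and rationale to each output and serializes them for an audit log. The `DecisionRecord` type, its field names, and `to_audit_json` are assumptions made for this example.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical record pairing an output with the reasoning behind it."""
    recommendation: str   # what the system proposed
    inputs: dict          # the specific information that was considered
    criteria: list        # the rules or policies that were weighed
    rationale: str        # plain-language explanation for reviewers


def to_audit_json(record: DecisionRecord) -> str:
    # Stable key order keeps audit entries easy to diff and review.
    return json.dumps(asdict(record), sort_keys=True)
```

The design choice here is that the explanation travels with the recommendation as one immutable unit, so a reviewer evaluating a flagged item sees the same inputs and criteria the system used.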

Contextual Adaptation: intelligence calibrated to the situation

Not every decision carries the same weight. Routine, low-risk work should move quickly and require minimal friction. Complex clinical situations demand more care: more review, more nuance, more human judgment. High-stakes decisions need to be escalated to the right people, with the right context already surfaced. A system that treats all of these the same isn’t adapting to healthcare; it’s ignoring it.

Contextual adaptation means the intelligence adjusts to the environment it operates in: the organization’s workflows, the applicable regulatory landscape, the risk profile of the situation at hand, and the complexity of the patient or process involved. Critically, it also knows how much autonomy to apply: simple, well-defined tasks move through automatically; borderline cases get flagged for review; edge cases are escalated, with full context already prepared for whoever takes over.

This calibration is what separates useful automation from reckless automation. It’s also what makes AI trustworthy at scale, because the system isn’t just fast; it’s appropriately careful. Routine work gets faster. Complex work gets better support. And the people in the loop receive decisions that are already sized and prepared for their level of authority and expertise.
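The three-tier handling described above (automatic, flagged for review, escalated) can be sketched as a small routing function. This is a minimal sketch under stated assumptions: the `risk` and `confidence` scores, the `Route` names, and every threshold value are hypothetical placeholders, and a real deployment would set them per workflow and regulatory context.

```python
from enum import Enum


class Route(Enum):
    AUTO = "process automatically"
    REVIEW = "flag for human review"
    ESCALATE = "escalate with full context"


def route_task(
    risk: float,
    confidence: float,
    review_risk: float = 0.3,      # placeholder threshold
    escalate_risk: float = 0.7,    # placeholder threshold
    min_confidence: float = 0.9,   # placeholder threshold
) -> Route:
    """Choose how much autonomy to apply; both scores are assumed to lie in [0, 1]."""
    if risk >= escalate_risk:
        # High-stakes work always goes to a person, regardless of confidence.
        return Route.ESCALATE
    if risk >= review_risk or confidence < min_confidence:
        # Borderline risk or an unsure model means a human takes a look.
        return Route.REVIEW
    # Low risk and high confidence: routine work moves through without friction.
    return Route.AUTO
```

For example, `route_task(risk=0.1, confidence=0.95)` returns `Route.AUTO`, while the same low-risk task with `confidence=0.5` is routed to `Route.REVIEW`.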

Anticipation: acting early enough to change outcomes

Most AI in healthcare is reactive. A question is asked and it responds. A task is submitted and it processes. This is useful, but it’s inherently downstream, engaging after something has already happened, often after the moment when intervention would have had the most impact.

Anticipation flips that orientation. Rather than waiting to be asked, a system built on this principle monitors continuously, detects patterns early, and surfaces what matters before it becomes a problem. It notices when a member is trending toward a high-risk state before they reach it. It identifies care gaps before they become claims. It flags a workflow exception before it causes a downstream delay.

The goal isn’t just to make existing processes faster; it’s to reduce how often the most costly, reactive processes are needed in the first place. That’s where the real value lives: not in processing work more efficiently, but in preventing the kind of work that should never have been necessary to begin with. Acting early enough to actually change outcomes is the shift from a system that follows events to one that gets ahead of them.
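To make “trending toward a high-risk state before they reach it” concrete, here is one simple way such a check might be written: fit a straight line to recent risk scores and ask whether it would cross a threshold soon. The function name, the evenly spaced scores, the threshold, and the use of a linear trend are all assumptions for illustration; production systems would likely use richer models.

```python
def projected_crossing(scores: list, threshold: float, horizon: int) -> bool:
    """Return True if a least-squares line through `scores` reaches
    `threshold` within `horizon` future steps.

    Scores are assumed to be evenly spaced in time, oldest first.
    """
    n = len(scores)
    if n < 2:
        return False  # not enough history to estimate a trend
    if scores[-1] >= threshold:
        return False  # already high-risk: that is a reactive alert, not an early one
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Extrapolate the fitted line `horizon` steps past the latest observation.
    projected = intercept + slope * (n - 1 + horizon)
    return slope > 0 and projected >= threshold
```

With scores rising steadily from 0.2 to 0.5 and a threshold of 0.7, the check fires for a three-step horizon but not for a one-step horizon, which is the kind of early signal the section describes.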

What this looks like in practice

When these four principles come together, they create a very different kind of AI system: one that remembers what matters, explains its decisions, acts with the right level of responsibility, and steps in early enough to actually change outcomes.

That’s the shift underway. Not just faster automation, but a coordinated intelligence layer that fits the reality of how healthcare works. And this isn’t theoretical; it’s already happening.

Organizations we work with every day are putting this into practice and seeing meaningful improvements in both efficiency and accuracy. Care management teams are moving through cases much faster, with far less manual effort. Prior authorization workflows that used to take 20–30 minutes can now be completed in seconds. At the same time, manual errors are dropping, and most importantly, thousands of clinical hours are being returned to patient care instead of administrative work.

80% faster case review time

95% reduction in intake, classification, and extraction time

95% increase in clinical team productivity

98% of AI-generated information accepted by clinicians

At scale, processes that once required significant operational time are now nearly instantaneous, saving millions each year. Clinical trial matching is not only faster, but more complete, helping ensure eligible patients aren’t missed.

These systems are already deployed across several of the largest U.S. health plans, supporting a wide range of workflows including utilization management, care management, claims, and pharmacy. And all of it runs on infrastructure purpose-built for healthcare, so organizations can scale with confidence, without compromising trust.

The Opportunity Ahead

The opportunity in front of healthcare isn’t simply to do the same work faster. It’s to fundamentally rethink how the work gets done and to build systems capable of functioning in the complex world of healthcare.

When AI is designed with memory, explainability, contextual adaptation, and a proactive orientation, it stops behaving like a tool and starts functioning like part of the system itself. It connects what was previously fragmented and brings clarity to decisions that were previously opaque. It helps teams act earlier, when the cost of acting is low and the value of acting is high.

This is how healthcare moves from reactive to proactive, from episodic to continuous, and from manual coordination to intelligent orchestration. The organizations that make this shift won’t just see efficiency gains; they’ll deliver more consistent care, make better decisions at scale, and build systems that actually support the people working inside them.

That’s what living intelligence makes possible. And it’s quickly becoming the standard for what healthcare AI should be.

Learn More

Contact us to learn more about how we can help you turn your data into living intelligence. Request a meeting: https://autonomize.ai/contact-us

Discover the Autonomize Intelligence Platform, a complete foundation for building, deploying, and governing healthcare-native AI Agents at enterprise scale.